In September 2019, Mac Russell (MS4 at USask) had the privilege of attending the 7th annual World Congress of Ultrasound in Medical Education (WCUME) in Irvine, California. Alongside Dr. Wayne Choi (co-founder of the scan-tracking app EchoLog), he presented on the University of Saskatchewan's experience with clinical ultrasound in undergraduate medical education. They focused on the importance of tracking scans as a way to measure growth and inform assessment.

In this first post, we focus on why tracking scans is important. In Part 2, we'll dive into the logistics of using the EchoLog app!

BACKGROUND

At USask, we have had an integrated clinical ultrasonography (also known as point-of-care ultrasound, or POCUS) curriculum in our undergraduate medical program since the fall of 2014. Pre-clerkship includes 4 distinct modules over 2 years, with ~10 hours of directly supervised scanning with qualified instructors. POCUS skills are assessed through exam questions and OSCEs. Students also have other scanning and learning opportunities, including near-peer tutoring through our student-led ultrasound interest group, regularly hosted POCUS courses (EDE, EGLS, etc.), and our annual conference (SASKSONO). Once students reach clerkship, supervision of scans varies, given the distributed nature of our program (several sites throughout the province) and the range of ultrasound expertise among faculty in different disciplines. Furthermore, it should be noted that logging clinical ultrasound scans is not currently a program requirement.

With all that said, one might ask: why bother logging scans? Well, to borrow from the sport performance lingo – you can't manage what you don't measure, and you can't improve if you don't know how you're doing!

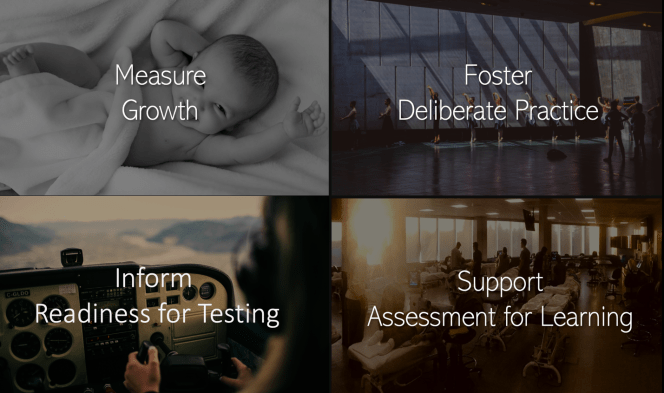

Measuring Growth: A Case Study

Take, for example, an EM shift with a clerk and an EM physician. EMS brings in a patient from a car accident, and the physician asks the student to perform a FAST scan. The trainee can generate a subxiphoid view of the heart, but in the RUQ is struggling to get around the rib shadows and clear the caudal tip of the liver. This is the first time the physician has worked with this student, and apart from the struggles with this particular scan, the physician knows little about the trainee's POCUS experience. Is the trainee simply new to scanning? Or is the trainee doing poorly despite many practice opportunities?

Essentially, we want to know whether this trainee is progressing appropriately for their stage of training (if this conjures images of a Rourke growth chart or a Fitbit, you're on the right track). There is some evidence that clinical ultrasound skills also follow learning curves. A study by Blehar et al.¹ showed that learning curves can be reasonably predicted for some clinical ultrasound applications, such as FAST and RUQ.

Now, it should be noted that the above graphs don't look much like curves, but there's a good reason for that. With help from little red, we can make the case for what Dr. Ray Wiss dubbed the "double learning curve" of POCUS.

It would seem more appropriate for the graphs to look something like the one above, since, generally speaking, a trainee must first be introduced to a scan before they can begin performing and practicing it! Recall that this study was based on recorded images, which trainees only started recording AFTER a day-long introduction to the scan. Such introductions can be quite intensive depending on the skills of the learner and the nature of the scan(s) being taught (think of day-long workshops with several standardized patients to practice on), so, not surprisingly, trainees tend to come out with pretty solid technique (and certainly much better than when they started). As a result, we see a two-part learning curve: a steep first curve (the intro session), followed by an apparent plateau in skills, and finally a second, flatter curve towards mastery.

It is during the plateau (aka apprenticeship) that logging and spaced supervision are so critically important. There are many reasons why skills may seem to plateau or stop improving. Technique may degrade, or a trainee may forget a key step; these problems need to be corrected through ongoing supervision (as was the case in the above study). But there are also confounders, as the authors mention: as technique and confidence gradually improve, trainees may choose to scan more challenging patients or in more challenging environments, resulting in an apparent lack of progress. All the more reason to ensure regular supervision with feedback, fostering deliberate practice (more on this below!).

This would seem to support the notion that most trainees improve with scan count, with approximately 50-100 encounters being a common threshold for reaching a reasonable level of performance in many applications. These learning curves describe the expected trajectory of skill acquisition, against which individual learners can be compared. Logging scans helps educators see whether a learner's POCUS skills are developing at a level appropriate for their experience.

When we track scans, the benefits go beyond only measuring growth.

Fostering Deliberate Practice

A second reason to track scans relates to Deliberate Practice². While regular practice is simply doing something over and over, deliberate practice involves regular feedback and specific goals. Recall that supervision in clerkship is variable, so some trainee scans are supervised and others are not. When we log a supervised scan, it means a qualified instructor has signed off, so there was an opportunity for real-time feedback and teaching, one of the key components of deliberate practice. However, given the large number of trainees (all medical students at USask!), trainees also need to engage in additional practice that incorporates the feedback they have received. This happens when you perform unsupervised scans on your own, practicing the skills and technique you've been coached in. And trainees should keep track of those scans too!

USask has also developed a Clinical Ultrasound Elective in Clerkship (CUSEC) that students can apply to in their 4th year. It is an innovative 2-week elective that starts with one week of intensive hands-on scanning and small-group learning, followed by a second week in which trainees integrate POCUS into clinical rotations such as emergency medicine, pediatrics, internal medicine, or surgery. Because space in the elective is limited (12 students), there is an application process, which involves submitting a "POCUS CV" outlining the student's past experience with clinical ultrasound. Part of the required POCUS CV is a copy of a log of all the scans you've completed. So, if you want to build a strong POCUS CV, you need to log scans that show your commitment to both supervised and unsupervised practice.

Assessment for Learning

We saw how the POCUS CV can be used to demonstrate a trainee's commitment to deliberate practice. What we have not yet discussed is how logging scans also benefits educators. A log tells the educator the trainee's baseline level of POCUS experience before the session in question (whether on shift, in the skills lab, or at a course). This helps educators plan the learning for the day or prepare materials for an upcoming session. With a strong cohort of trainees, educators can adapt the introduction to a session and push forward to challenge trainees with new applications and learning opportunities.

Readiness for Assessment

Lastly, tracking scans helps gauge trainees' Readiness for Assessment. Let's look at another situation, this time involving flying airplanes and helicopters.

To get your pilot's licence in Canada, there are many requirements: ground school, supervised flight time of at least 45 hours, and a written exam. There are also milestones to achieve along the way: the first solo, a cross-country solo, a pre-flight test, and the flight test (the equivalent of a final exam). So, how does a flight school figure out when a student is ready to attempt each of these milestones? Flight schools use logbooks as a general gauge: the first solo happens at around 10-20 hours of logged flight time, the cross-country solo at around 30-50 hours, and the flight test usually after about 55 hours (although the minimum is 45). Along with feedback from supervised training flights, these thresholds help instructors judge when their students may be ready to attempt a milestone.

In POCUS, proficiency requires both experience and formal assessment. The 2016 ACEP EUS policy statement states that trainees should complete a benchmark of 25-50 reviewed exams for a particular application, and 150-300 EUS exams in total. The Canadian Point of Care Ultrasound Society (CPOCUS) also requires an apprenticeship of 50 supervised scans for each core indication, but certification requires both the scan numbers and successfully challenging their exam. The Canadian Association of Emergency Physicians recently updated their EM POCUS Position Statement, and they too emphasize the need for both a supervised apprenticeship and a summative examination of skills.

But, similar to getting the pilot licence, how do educators estimate when a learner may be ready for their formal assessments?

There is evidence to confirm what is already ingrained in us: practice is important in becoming proficient in POCUS. A study by Duanmu et al.³ compared EM residents' OSCE scores with the number of scans they had logged. They describe a plateau (we'll call this the second plateau) beginning at around 300 total scans (across all core EM applications). From their results, we can extrapolate the number of scans above which most learners are likely to be ready to pass. This matters from a resource-allocation perspective, since we want to reserve a 3-hour examination for instances where MOST trainees are predicted to pass. This is further evidence that a scan log can serve as a guide for triggering summative assessment.
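To make the idea of a log-based trigger more concrete, here is a minimal sketch in Python. It is not how EchoLog or USask actually work: the `Scan` record, the `summarize` and `ready_for_assessment` helpers, and the application labels are all hypothetical. The default thresholds simply reuse figures mentioned above (50 supervised scans per core indication, as in the CPOCUS apprenticeship, and roughly 300 total scans, where Duanmu et al. observed a plateau) as illustrative triggers, not as a validated pass criterion.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Scan:
    """One logged scan (hypothetical record, not EchoLog's data model)."""
    application: str  # e.g. "FAST" or "RUQ"
    supervised: bool  # True if a qualified instructor signed off


def summarize(log):
    """Return (total, supervised) scan counts per application."""
    total = Counter(scan.application for scan in log)
    supervised = Counter(scan.application for scan in log if scan.supervised)
    return total, supervised


def ready_for_assessment(log, applications, per_app_supervised=50, min_total=300):
    """Suggest readiness for summative assessment.

    Returns (ready, shortfall), where shortfall maps each listed application
    to the number of supervised scans still missing. Thresholds are
    illustrative defaults, not a program or certification rule.
    """
    _, supervised = summarize(log)
    shortfall = {
        app: per_app_supervised - supervised[app]
        for app in applications
        if supervised[app] < per_app_supervised
    }
    # Ready only if the overall count is met AND no application falls short.
    ready = len(log) >= min_total and not shortfall
    return ready, shortfall


# Usage: 320 scans in total, but only 40 supervised RUQ scans.
log = [Scan("FAST", True)] * 60 + [Scan("RUQ", True)] * 40 + [Scan("RUQ", False)] * 220
print(ready_for_assessment(log, ["FAST", "RUQ"]))  # (False, {'RUQ': 10})
```

In this example the learner has enough total scans and enough supervised FAST scans but is 10 supervised RUQ scans short, so the sketch would hold off on booking the formal assessment. Counting supervised and unsupervised scans separately mirrors the distinction drawn earlier between instructor feedback and independent practice.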

We hope we've convinced you that logging your scans is important! But the question remains: what is the best way to do it? Image capture for a handful of EM residents is fairly straightforward – but what about 400 medical students!? How does USask do it? That's Part 2 – stay tuned!

MacKenzie Russell & Wayne Choi

Peer Reviewed by Paul Olszynski

References

1. Blehar DJ, Barton B, Gaspari RJ. Learning curves in emergency ultrasound education. Academic Emergency Medicine. 2015 May;22(5):574-82.

2. Ericsson KA. Deliberate practice and acquisition of expert performance: a general overview. Academic Emergency Medicine. 2008 Nov;15(11):988-94.

3. Duanmu Y, Henwood PC, Takhar SS, Chan W, Rempell JS, Liteplo AS, Koskenoja V, Noble VE, Kimberly HH. Correlation of OSCE performance and point-of-care ultrasound scan numbers among a cohort of emergency medicine residents. The Ultrasound Journal. 2019 Dec;11(1):3.
